YouTube is rolling out its advanced likeness detection technology to a pilot group of government officials, political candidates, and journalists to combat the rising threat of AI-generated deepfakes, the company announced on Tuesday. This initiative grants these public figures a direct mechanism to identify unauthorized AI content and request its removal if it violates platform policies.

Expanding the Shield Against AI Impersonation

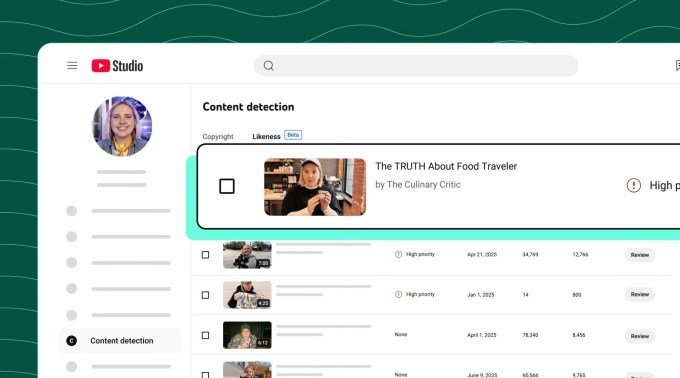

The technology, which debuted last year for roughly 4 million creators within the YouTube Partner Program, functions similarly to the platform’s Content ID system. Instead of scanning for copyrighted music or video, however, it flags simulated faces created by AI. These deepfakes are increasingly used to manipulate public perception by making notable figures appear to say or do things they never said or did.

Leslie Miller, YouTube’s VP of Government Affairs and Public Policy, emphasized that the rollout is a strategic move to preserve the integrity of civic discourse. “We know that the risks of AI impersonation are particularly high for those in the civic space,” Miller stated during a press briefing. She noted that while the tool acts as a new protective layer, the company is taking care to balance security with free expression.

Navigating Free Speech and Policy

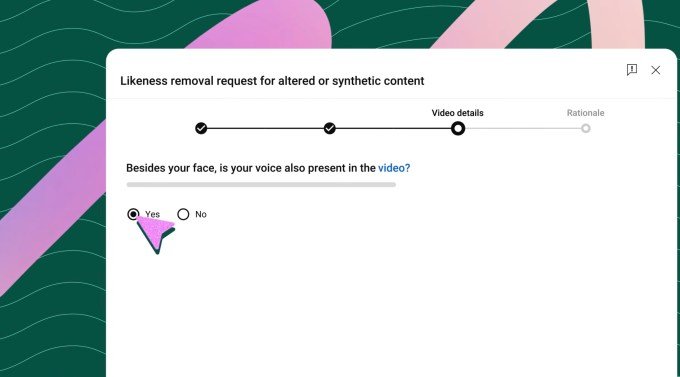

Not every request will result in an automatic takedown. YouTube intends to vet requests against its existing privacy guidelines to ensure that content deemed parody or political critique, both protected forms of expression, remains online. Beyond internal tools, the company is also backing the NO FAKES Act in Washington, D.C., which seeks federal regulation of the unauthorized digital recreation of voices and likenesses.

To participate in the pilot, testers must verify their identity with a selfie and a government-issued ID. Once set up, they can monitor matches and request removals. YouTube eventually plans to add features that block violating content before it is uploaded or, potentially, let rights holders monetize such videos, mirroring the existing Content ID model.

Transparency in AI Labeling

YouTube continues to apply labels to AI-generated content, though placement varies based on the nature of the video. Content regarding sensitive topics receives more prominent labeling, while other AI-generated material may carry only a disclosure in the description.

Amjad Hanif, VP of Creator Products, noted that the volume of removal requests thus far has been “very low,” as much of the AI content detected by creators has proven to be benign or even additive to their content strategy. However, the company acknowledges that the stakes are significantly higher when the subject matter involves government officials and journalists, and it intends to expand the technology to cover voices and other intellectual property in the future.