Conspiracy theories are sweeping social media, alleging that Israeli Prime Minister Benjamin Netanyahu has been replaced by AI-generated deepfakes following recent claims of his death or injury. From viral clips purportedly showing the leader with extra fingers to bizarre debates over how he holds a coffee cup, the episode illustrates one thing: objective reality has become nearly impossible to verify.

The Anatomy of a Viral Conspiracy

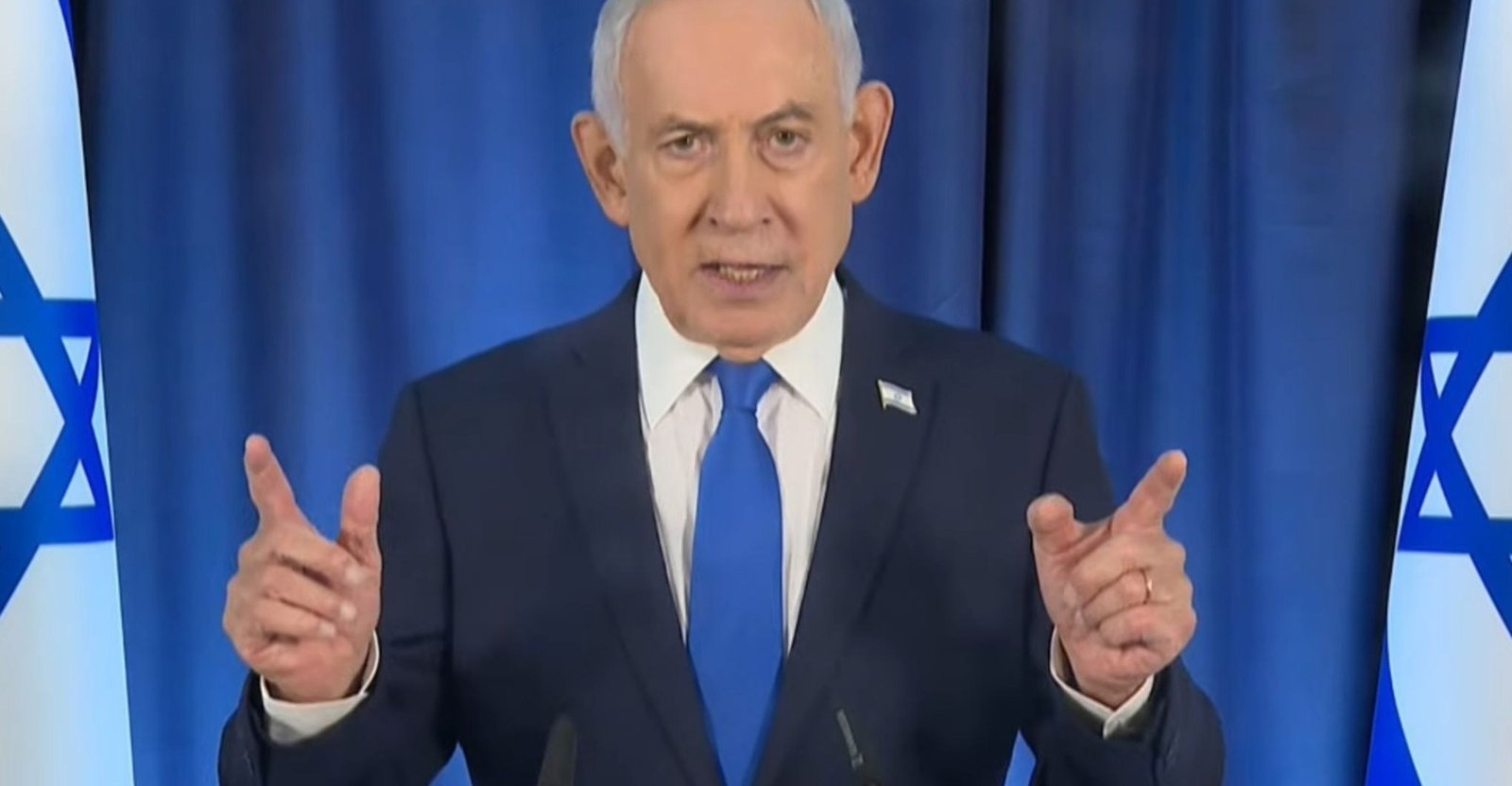

The speculation ignited after a livestreamed press conference hosted by Netanyahu last Friday. Social media users flooded platforms with claims that the footage showed the Prime Minister with six fingers on his right hand, a common glitch in older generative AI models. These users suggested that Israel was using deepfake technology to conceal Netanyahu's alleged death in an Iranian missile strike.

However, independent fact-checkers, including Snopes and PolitiFact, have debunked these claims. The “extra” finger can be attributed to video compression, low resolution, and poor lighting. Furthermore, the video’s 40-minute runtime far exceeds the capabilities of current AI video generation models, which typically struggle to maintain consistency over such extended periods.

The “Proof-of-Life” Backfire

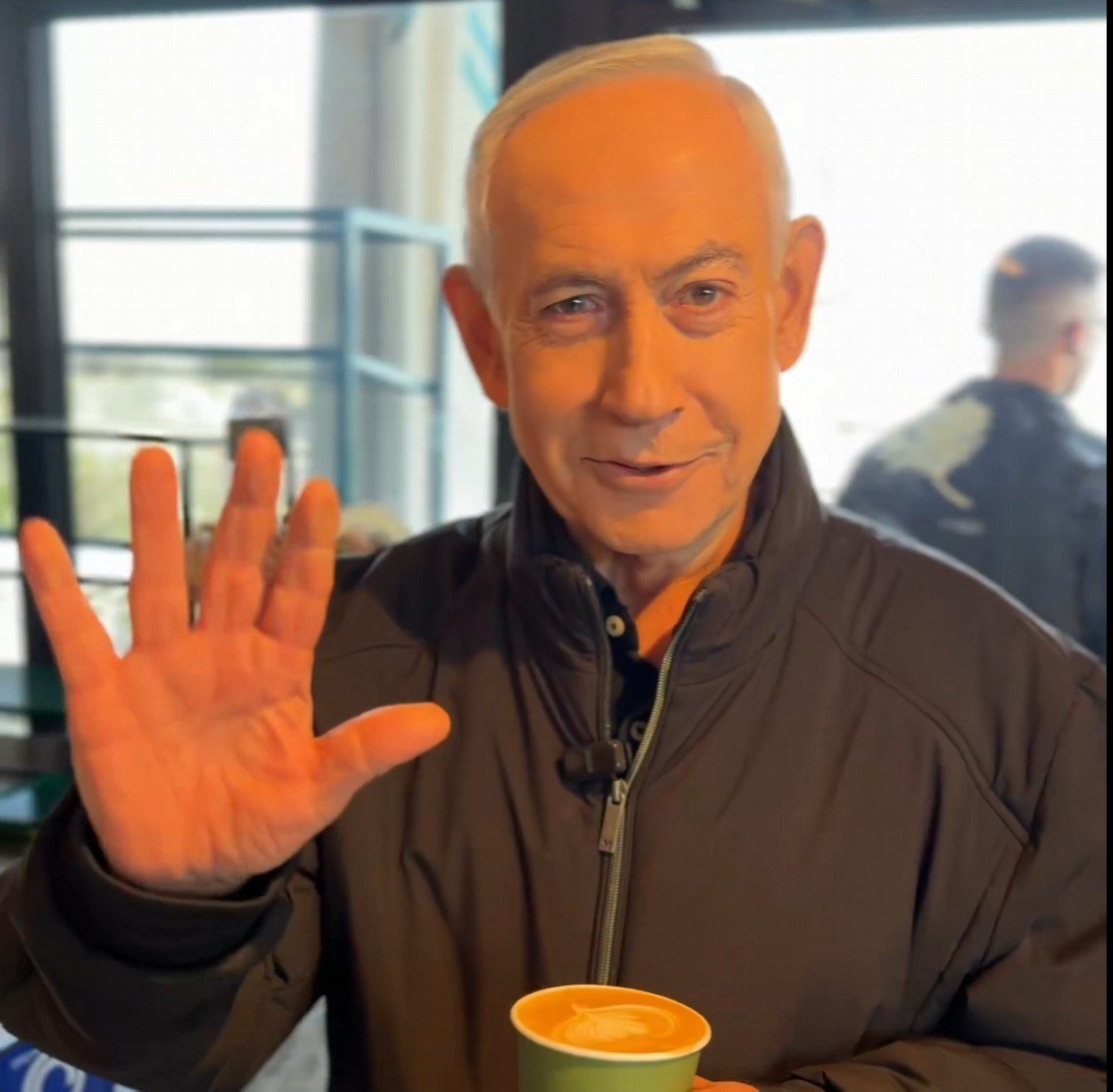

In a bid to silence the rumors, Netanyahu released a video on X showing him in a coffee shop, explicitly asking the camera operator to count his fingers. Instead of quelling the noise, the move backfired. Skeptics immediately dissected the footage, pointing to supposed visual inconsistencies, such as liquid moving unnaturally in his cup and his ring appearing to flicker in and out of existence.

Critics also scrutinized the background, noting that a cash register appeared to display a 2024 date, and questioned which hand he used to hold the beverage. These observations, while largely circumstantial, highlight a deeper issue: when the public no longer trusts its own eyes, every minor detail becomes a potential smoking gun for a conspiracy.

The Absence of Digital Verification

The core of the problem lies in a lack of technical accountability. Neither clip carries provenance signals such as C2PA Content Credentials metadata or a SynthID watermark, which are designed to help trace the origin of digital media. Despite pledges from platforms like YouTube and Instagram to label AI-manipulated content, neither clip received a definitive tag, leaving users trapped in a vacuum of uncertainty.

A Growing Crisis of Institutional Trust

This “proof-of-life” anxiety is being weaponized in the broader geopolitical conflict. On Sunday, President Donald Trump took to Truth Social to accuse Iran of using AI as a “disinformation weapon.” He went as far as calling for media outlets to be charged with treason for disseminating false information. However, this rhetoric arrives at a time when political actors, including the Trump administration itself, frequently use AI-generated memes and content to shape public perception.

As AI tools become increasingly sophisticated, the “tells” that once identified synthetic media are disappearing. We are entering an era where the inability to distinguish between authentic and generated content is, in itself, a tool for destabilization. For now, the public is left to navigate a world where a simple coffee break is no longer enough to prove someone exists.